Kubernetes takes responsibility for knowing about and responding to changes in the health of your containers. If a container fails to start properly, Kubernetes will try to start it again. Similarly, if a container exits prematurely, Kubernetes will notice and reschedule it to restore the previous level of availability. While this is happening, it will also automatically adjust the networking to make sure no new traffic is forwarded to the unhealthy containers. Kubernetes provides mechanisms for defining your own criteria for container health, which it will then monitor for you.
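To make the health-check behavior more concrete, here is a toy Python model of the two kinds of checks involved. A liveness check whose repeated failure triggers a restart, and a readiness check whose failure removes the container from the set of endpoints that receive traffic. `ProbedContainer` and `probe_once` are hypothetical names for illustration only. In a real cluster these checks are declared through a Pod's `livenessProbe` and `readinessProbe` fields and executed by the kubelet. The sketch keeps only the idea of `failureThreshold` (consecutive failures before acting, default 3) and deliberately ignores details such as `periodSeconds`, `initialDelaySeconds`, and restart back-off.

```python
from dataclasses import dataclass
from typing import Callable, Set


@dataclass
class ProbedContainer:
    """Toy stand-in for a container with liveness and readiness checks (hypothetical model)."""
    name: str
    liveness: Callable[[], bool]   # returns True when the container is alive
    readiness: Callable[[], bool]  # returns True when it can accept traffic
    failure_threshold: int = 3     # assumed to mirror the Pod spec's failureThreshold default
    liveness_failures: int = 0
    readiness_failures: int = 0
    restarts: int = 0
    ready: bool = True


def probe_once(c: ProbedContainer, endpoints: Set[str]) -> None:
    """Run one probe period, updating the container state and the endpoint set in place."""
    # Liveness: restart only after `failure_threshold` consecutive failures.
    if c.liveness():
        c.liveness_failures = 0
    else:
        c.liveness_failures += 1
        if c.liveness_failures >= c.failure_threshold:
            c.restarts += 1
            c.liveness_failures = 0

    # Readiness: stop routing traffic after consecutive failures,
    # and resume on the first success.
    if c.readiness():
        c.readiness_failures = 0
        c.ready = True
    else:
        c.readiness_failures += 1
        if c.readiness_failures >= c.failure_threshold:
            c.ready = False

    # Keep the endpoint set in sync so no new traffic reaches an unready container.
    if c.ready:
        endpoints.add(c.name)
    else:
        endpoints.discard(c.name)


if __name__ == "__main__":
    endpoints = {"web-1"}
    web = ProbedContainer("web-1", liveness=lambda: False, readiness=lambda: False)
    for _ in range(3):
        probe_once(web, endpoints)
    print(web.restarts, web.ready, endpoints)  # 1 False set()
```

Separating the two checks reflects the distinction the paragraph draws: a container can be alive but temporarily unable to serve, in which case only its traffic is withdrawn and no restart happens.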

Kubernetes tries to automatically select the best node for containers based on the containers’ requirements and the current status and resources available on individual nodes. In this context, “schedule” means selecting an appropriate node, passing off the task to it, and then reacting if health checks indicate that the workload needs attention. Users can influence the scheduling decisions by providing additional requirements when deploying containers to the cluster. They can also influence scheduling by changing the properties associated with specific nodes to increase or decrease the likelihood of certain types of containers being assigned to an individual node. This type of flexibility provides a good balance between hands-off automatic selection and fine-grained control to run containers how you’d like.
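The node-selection process described above can be sketched as a two-phase filter-and-score loop, which is broadly how the real kube-scheduler is organized. This is a simplified toy model, not the scheduler's code or API. `Node`, `PodRequest`, `feasible`, and `schedule` are hypothetical names. Requirements are reduced to CPU and memory requests plus a label selector, loosely modeled on a Pod's `nodeSelector`. The scoring rule (most spare CPU, then memory) is an assumption chosen for clarity.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Node:
    """Hypothetical node record: unreserved capacity plus user-assigned labels."""
    name: str
    free_cpu_millis: int
    free_mem_mib: int
    labels: Dict[str, str] = field(default_factory=dict)


@dataclass
class PodRequest:
    """Hypothetical workload requirements supplied at deploy time."""
    name: str
    cpu_millis: int
    mem_mib: int
    node_selector: Dict[str, str] = field(default_factory=dict)


def feasible(node: Node, pod: PodRequest) -> bool:
    # Filter step: the node must have room for the requests and carry every selector label.
    fits = node.free_cpu_millis >= pod.cpu_millis and node.free_mem_mib >= pod.mem_mib
    labels_match = all(node.labels.get(k) == v for k, v in pod.node_selector.items())
    return fits and labels_match


def schedule(pod: PodRequest, nodes: List[Node]) -> Optional[str]:
    candidates = [n for n in nodes if feasible(n, pod)]
    if not candidates:
        return None  # no node qualifies; the pod would stay Pending
    # Score step: prefer the node left with the most spare CPU, then memory.
    best = max(
        candidates,
        key=lambda n: (n.free_cpu_millis - pod.cpu_millis, n.free_mem_mib - pod.mem_mib),
    )
    # "Pass off the task": reserve the requested resources on the chosen node.
    best.free_cpu_millis -= pod.cpu_millis
    best.free_mem_mib -= pod.mem_mib
    return best.name


if __name__ == "__main__":
    nodes = [
        Node("node-a", 2000, 4096, {"disktype": "hdd"}),
        Node("node-b", 1000, 8192, {"disktype": "ssd"}),
        Node("node-c", 3000, 2048, {"disktype": "ssd"}),
    ]
    print(schedule(PodRequest("db", 500, 1024, {"disktype": "ssd"}), nodes))  # node-c
    print(schedule(PodRequest("batch", 4000, 1024), nodes))  # None
```

The two levers the paragraph mentions map onto the two dataclasses. Adding a selector to a `PodRequest` narrows where it may run. Changing a node's labels (for example, relabeling `node-a` as `disktype: ssd`) changes which workloads it can attract.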

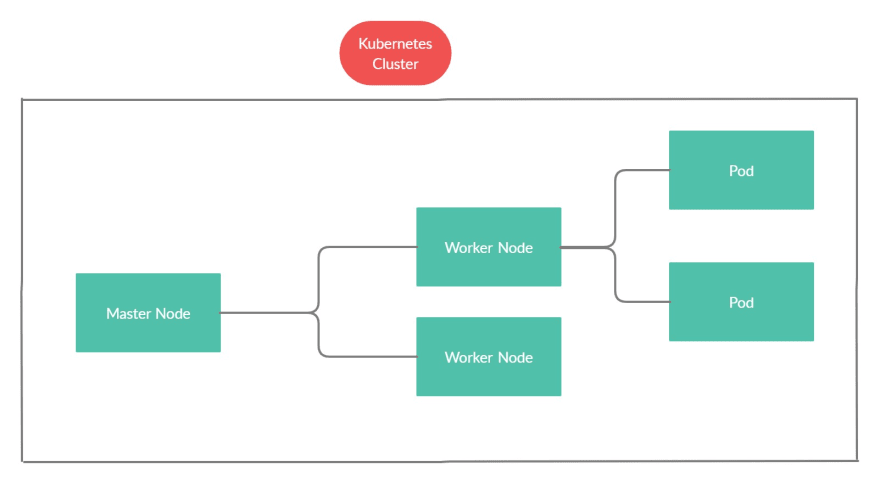

Container orchestration grew out of the need for an additional layer of management above individual container runtimes. These systems coordinate with container runtimes to manage the life cycle of containerized workloads and operate an entire supporting environment for containers to create a scalable platform for deployment. While runtimes are able to manage containers on a single host, they often don’t provide adequate methods of scaling. Furthermore, while running containers on hosts is certainly a central requirement of running production-ready containers, there are many other requirements that need to be addressed. Container orchestrators take on a core subset of these requirements to provide additional functionality. In short, container orchestration systems are designed to create a complete management environment that takes care of the hard work of operating containers with production requirements.

Kubernetes is, by far, the most popular and feature-rich container orchestration system in the world. It grew out of an internal Google cluster system called Borg, which was used to operate and scale workloads across the organization starting in 2003. In 2014, after transitioning internally to a new system, Google released Kubernetes as an open-source system built on the lessons of Borg. Kubernetes immediately captured the interest of the container community due to its well-defined primitives, robust architecture, and a design proven to be able to handle enormous workloads. After Kubernetes hit version 1.0 in 2015, Google and the Linux Foundation partnered to create the Cloud Native Computing Foundation as a steward for Kubernetes and like-minded open-source projects, to ensure that the community would maintain ownership and control over Kubernetes. From there, Kubernetes continued to gain widespread adoption as it matured and its ecosystem ballooned with countless projects seeking to enhance the platform.

Kubernetes offers a wide range of features that help it achieve production viability. Let’s take a look at some of the most important ones. One of the fundamental capabilities that Kubernetes provides is the ability to schedule containers on individual nodes based on given requirements.

What is Kubernetes, and how does it work? In this guide, we’ll talk about how Kubernetes came to be, introduce some core Kubernetes concepts, and explore how container orchestration platforms help turn containerized applications into robust, highly scalable environments for modern development.

Container Orchestration and the Birth of Kubernetes

Container orchestration is a term for software that allows operators to run groups of containers across multiple hosts.

As technologies like Docker and containerization have become indispensable parts of developer and operations toolkits and gained traction in organizations of all sizes, the need for better management tools and deployment environments has increased. Kubernetes, a container orchestration system, has become the de facto standard for managing complex container workloads in production environments.